Nvidia's AI Revenue Explosion and the Great Enterprise Debate

Nvidia has emerged as the undisputed winner of the AI boom, turning technological leadership into extraordinary revenue growth. In its most recent quarter, the third quarter of fiscal 2026, Nvidia's revenue reached $57 billion, up 22% from the previous quarter and 62% from a year earlier. The growth is driven primarily by soaring demand for AI infrastructure from cloud providers, AI developers, and enterprises.

At the core of this story is Nvidia's data center business, which generated $51.2 billion in revenue, a 66% jump from a year earlier. That figure reflects demand not for ordinary servers but for Nvidia's AI computing systems, built around its graphics processing units (GPUs). Originally designed to render graphics, GPUs perform thousands of calculations in parallel, which makes them well suited to artificial intelligence workloads, from training massive language models to running AI applications in real time.
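As a rough sanity check on these figures, the earlier-period revenue implied by each growth rate can be backed out by dividing the current figure by one plus the growth rate. The Python sketch below uses only the rounded numbers quoted above, so its results are approximations rather than Nvidia's reported figures; the helper name `implied_prior` is purely illustrative.

```python
# Back out the earlier-period revenue implied by the growth rates quoted above.
# Inputs are the article's rounded figures (USD billions), so outputs are approximate.

def implied_prior(current: float, growth_pct: float) -> float:
    """Return the earlier-period value implied by `current` and a percentage growth rate."""
    return current / (1 + growth_pct / 100)

total_revenue = 57.0        # latest quarter, USD billions
data_center_revenue = 51.2  # latest quarter, USD billions

prev_quarter_total = implied_prior(total_revenue, 22)          # quarter-over-quarter growth
year_ago_total = implied_prior(total_revenue, 62)              # year-over-year growth
year_ago_data_center = implied_prior(data_center_revenue, 66)  # year-over-year growth
data_center_share = data_center_revenue / total_revenue        # fraction of total revenue

print(f"Implied prior-quarter revenue: ${prev_quarter_total:.1f}B")
print(f"Implied year-ago revenue: ${year_ago_total:.1f}B")
print(f"Implied year-ago data center revenue: ${year_ago_data_center:.1f}B")
print(f"Data center share of revenue: {data_center_share:.0%}")
```

With these inputs, the data center business accounts for roughly 90% of total revenue, and implied year-ago revenue comes out near $35 billion.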

Demand is so strong that Nvidia's cloud GPUs are effectively sold out, with orders stretching years into the future. Hyperscalers (the largest cloud providers) such as Google Cloud, Microsoft Azure, and Amazon Web Services are investing billions of dollars in Nvidia-powered systems as they compete for dominance in the AI era. Industry analysts note that 2026 capacity has already been reserved and that 2027 demand is building quickly.

This demand extends beyond the major cloud providers to leading AI laboratories such as Anthropic, Mistral, and OpenAI, which are developing foundation models that require enormous computational power. Each new generation of models demands more Nvidia GPUs, creating a self-reinforcing cycle of escalating demand that shows no signs of slowing.

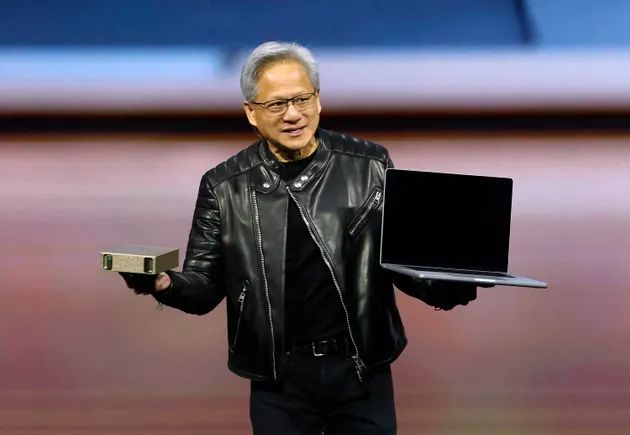

Nvidia's leadership characterizes this phenomenon as a structural transformation rather than a temporary trend. CEO Jensen Huang points to accelerating compute demand for both training and inference operations. Training represents the learning phase where AI models acquire knowledge, while inference occurs when AI systems actually respond to queries, generate code, or analyze data in real-world applications.

The company has fundamentally reinvented itself over 25 years, evolving from a gaming GPU specialist to an AI data center infrastructure powerhouse. This transformation places Nvidia at the center of modern computing's evolution away from traditional CPUs toward accelerated computing optimized for artificial intelligence workloads.

While some observers worry about a potential AI bubble, Huang envisions a long-term shift in how computing is done. Hyperscalers are not merely experimenting at the margins; they are redesigning their data centers around Nvidia's accelerated computing model. Strategic agreements with major cloud and AI firms show how deeply embedded Nvidia has become in next-generation cloud platforms.

However, this infrastructure boom coexists with a critical challenge: many enterprises struggle to demonstrate a clear return on investment from their AI initiatives. Industry research indicates that fewer than half of IT leaders report profitable AI projects, with many barely breaking even or losing money. This disconnect highlights a crucial distinction between AI training and inference.

Training involves the expensive process of building and refining AI models, while inference represents the deployment phase where models perform actual business tasks. Currently, most enterprise spending focuses on training, but the real financial benefits emerge during inference when AI systems handle customer service, fraud detection, supply chain optimization, and other operational functions at scale.

This timing gap fuels intense debate about whether current AI investment represents a dangerous bubble or the foundation of a massive enterprise transformation wave. The infrastructure buildout resembles the early days of cloud computing, when skeptics questioned the return on investment before widespread adoption proved the technology's value.

Analysts expect forecasts for AI infrastructure spending to keep rising as enterprises move from experimental projects to production deployments. The enterprise AI wave has barely begun, with most businesses still in the early stages of integrating artificial intelligence into daily operations. As that transition accelerates, demand for AI compute could grow even faster.

Nvidia's partnerships with cloud giants and model builders create a powerful ecosystem effect. By aligning both with platform owners such as Google, Microsoft, and Oracle and with model creators such as the leading AI laboratories, Nvidia positions its GPU infrastructure as the standard foundation for next-generation AI applications. This strategy transforms Nvidia from a graphics company into the invisible backbone of the AI revolution across industries and around the world.